OpenAI has released a new version of its image-generation system, now branded as Images 2.0, rolling it out across ChatGPT, Codex and its developer API. It marks a substantial progress, not just in output quality, but in how the system approaches the task itself.

Earlier image models, including OpenAI’s own, have tended to fall apart in predictable ways: dense blocks of text would come out garbled, spatial relationships between objects would drift, and anything tied to current events was often unreliable. Images 2.0 attempts to tackle all three, with mixed but noticeable progress.

The headline feature is what OpenAI calls “thinking”. In practice, this means the model doesn’t jump straight to generating an image. Instead, it works through the prompt in stages, closer to how newer reasoning models handle text. When this mode is switched on, the system can pull in real-time information, generate multiple variations in one go, and keep characters or visual styles consistent across them. It can also check its own output before returning a final result — a small but meaningful change from the usual one-shot approach.

That shift is important. Image generation has largely been a cycle of trial and error: generate, inspect, tweak the prompt, repeat. If the model can do some of that iteration internally, it changes the experience — even if it adds a bit of time.

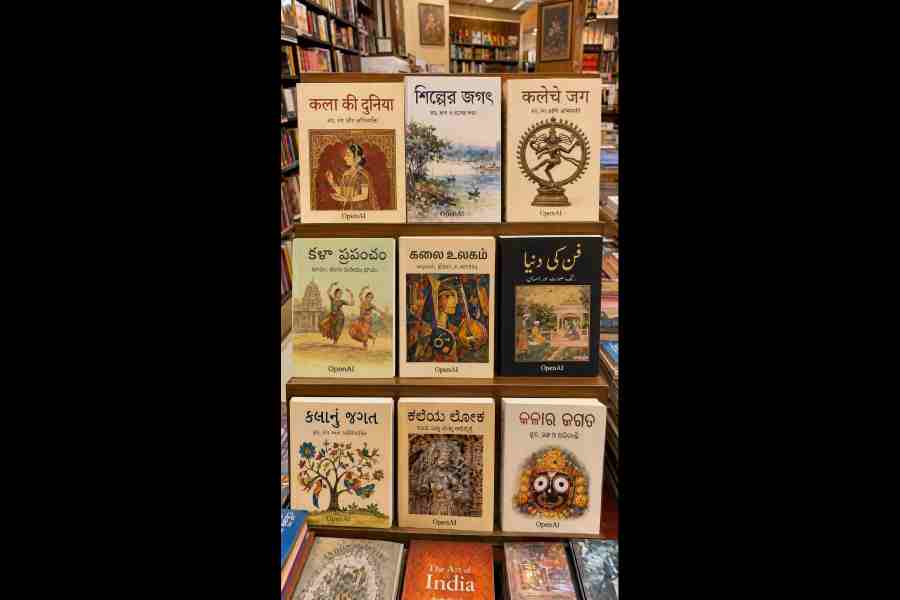

The tool has a better multilingual understanding of Bengali, Hindi, Japanese, Korean and Chinese. Picture: OpenAI

Generating something more involved, like a comic strip or a set of design mock-ups, can still take a few minutes, but the trade-off feels reasonable given the complexity.

Text rendering, long a weak spot for image models, also seems to have improved. The underlying issue has been technical: diffusion models aren’t built to “spell” in any reliable sense. Newer approaches, including autoregressive techniques, are better suited to handling language within images. The gains here are particularly visible in non-Latin scripts such as Hindi, Bengali, Japanese, Korean and Chinese, where earlier systems often struggled. It’s not just about correct characters — it’s about making the text look like it belongs in the image.

There are more incremental upgrades elsewhere. The model supports outputs up to 2K resolution, handles a broader range of aspect ratios, and does a better job of placing objects where they’re supposed to be — useful for things like diagrams or interface mock-ups where layout is doing a lot of the work.

Stylistically, too, it’s more reliable. Whether it’s pixel art, manga panels or something closer to a cinematic still, the model is better at holding onto the defining traits of a style instead of drifting into something generic.

All of this comes at a time when competition in image generation is picking up. OpenAI, for its part, is marketing Images 2.0 as more than just a tool for making pictures. The idea is a system that can take a rough prompt, gather context, make decisions, and deliver something closer to a finished output... without requiring constant back-and-forth from the user.

Images 2.0 is available now across ChatGPT and Codex, with the more advanced “thinking” features reserved for paid tiers. API access is live as well, with pricing tied to output quality and resolution.