“It’s always Day One at Amazon. We want Alexa in India to continuously get smarter and more useful for our customers. And that has been happening by Alexa learning new skills every day. How do we think of Day One? It’s ultimately the fact that customers always want something better from any service or product we have for them,” said Rohit Prasad, vice-president and head scientist, Alexa AI, Amazon, while addressing the audience at Alexa VOXCON, the company’s conference on all things voice, in Delhi last week.

Few people understand Alexa better than Rohit, who grew up in Ranchi and now lives in the US. Now in his 40s, he spent his childhood years glued to the TV watching Star Trek episodes, mesmerised by the way computers and humans communicated on the show. His story didn’t stop there, as The Telegraph found out.

The India story

Alexa has been working great in Indian English for our customers. But what we are also seeing is that adoption is increasing in a big way. We have found that as the number of Alexa-enabled devices has grown, so has Alexa’s relevance to India. Customers are happy to access content like music just through voice, something that was unthinkable even five years ago when you had to pick up your phone and play music. Voice has made things easier. We are not slowing down. Alexa is becoming more and more ubiquitous. Another great surprise has been smart homes. When we launched Alexa in the US, the smart home was an emerging concept. In India, what we find is that customers buy an Alexa device and a smart device together. That’s again a great testimony to how this area is emerging and working. In the last 12 months we have seen a threefold increase in the number of smart home users.

Contextually relevant content

Every country, to Alexa and our AI team, is a huge challenge. Our goal is for Alexa to be a native citizen in a country, which means Alexa should really learn regional accents, words and cultural nuances. When you are in another country, you should have the whole selection of content customised to that country. We have a great selection of partners in India who have helped us.

For example, there is a unique way customers select music in India. In the US, for instance, music is consumed by the title of a song or the artiste. In India, we are huge fans of Bollywood. People ask for Shah Rukh Khan songs. Another unique thing, there are many festivals. For instance, I grew up enjoying Diwali. It is quite common to have a playlist specific for every festival. We have done that... come up with contextually relevant content. In India we have an insatiable thirst for knowledge. And education is a big domain. Education-related questions are also dominating traffic.

Once we launched Alexa Skills Kit, it democratised how conversational AI is used to build new functionalities in services like Alexa. Alexa has 90,000 skills worldwide. And there are 30,000 skills specific to India.

Let’s talk in Hindi

Alexa now not only understands Hindi, it responds in Hindi too. What took us so long? Customers were, in fact, speaking to Alexa in Hindi before it even understood it. This was made possible with the Cleo skill (a skill designed to improve Alexa’s understanding of local languages), which ended up collecting different variants of how customers would like Alexa to understand Hindi. For example, there are a lot of artiste names that are very Hindi, Kannada or Telugu-centric. We already have that knowledge.

Not just Hindi, Alexa also understands Hinglish. When I was growing up in India, my interactions with friends were Hinglish-centric. So Alexa had to be taught to understand two languages being used in the same sentence. There was another challenge: as Hindi is not written in Latin script, Alexa had to understand Devanagari as a script.

We also have to remember that language and personality go hand in hand. If I ask a playful question, Alexa’s response needs to be a playful one. And we realise that many Indian households are multilingual. I speak English but my mother prefers to interact with Alexa in Hindi. We don’t want to be in a situation where one has to switch (settings) between languages all the time. Coming soon, such households will be able to interact seamlessly with Alexa.

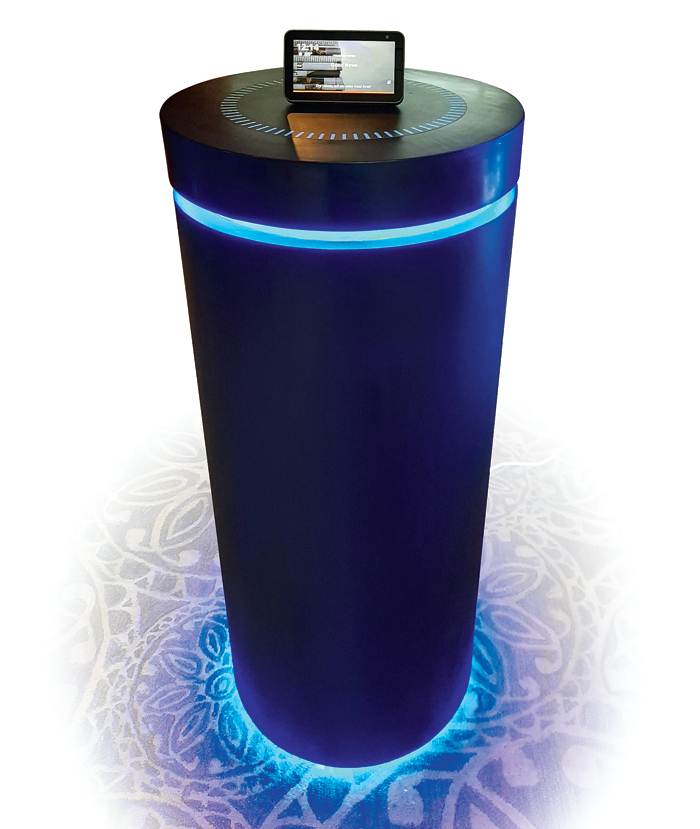

An Echo installation at Alexa VOXCON, the company’s conference in India dedicated to all things voice, in Delhi last week. Picture: The Telegraph

Adding to Alexa’s story in India is Parag Gupta, head of devices, Amazon India. The Telegraph caught up with him.

The journey that started with Indian English

We rely a lot on the voice of the customer. Indian English in itself is a different language. It is very different from US or Australian English. Pronunciations and contexts are different. We asked ourselves what people would talk about. People would talk about music, about movies, about cities, about festivals... from Day One Alexa could understand a movie title in Tamil, tell a Rajinikanth joke and understand words like Thiruvahindrapuram and Jalandhar. The big question was whether Alexa could speak back in Hindi and whether a person could have a conversation with her. We also asked if Alexa could speak Hinglish. Cleo helped. What happens in Cleo is like a child learning a language. The more it hears and gets a context for those words, the quicker it learns. Alexa is in the Cloud and Cleo helped it learn a lot of Hindi words and also words in other languages. A challenge we have faced with every language is dialects. Now if you tell Alexa “Rabindranath Tagore” she would understand it just as well as if you say “Robindronath Thakur”. As more and more people interact with Alexa, her pronunciation and context skills will improve. The same journey will happen with Hindi.

Lessons from India

Hinglish is probably the first mixed-language challenge on such a huge scale that we have undertaken. Whatever lessons we have learnt here, and continue to learn, will be applicable to other language models. Rohit (Prasad) was right when he said that by solving so many problems in India, we could transport a lot of those solutions, both technical and operational, to other locales. I can immediately think of English and Spanish in the US or English and French in Canada.

Beyond Hindi

I can’t put a deadline or talk about a roadmap. It is a learning process. I would say it’s already happening. You can ask Alexa to play a Tamil song or ask for a Marathi movie. But to have a full conversation, complete with contexts, that process will take time. AI and ML will have to catch up.

Alexa in Indian cars

Can that happen? Yes, it is happening in the US where Alexa is built into Ford cars. We also have a device called Echo Auto, which essentially allows you to retrofit your car with Alexa. From a vision perspective, I don’t see any problem. But then the challenge is customer experience. Our road conditions are different, how many people typically travel in a car is different (in India), the noises are different. You have to solve that first.

Machine learning can solve real-world problems

Alexa’s Whisper Mode is an interesting feature that’s available in the US. It helps especially if you want to strike up a conversation with Alexa while your kid sleeps nearby. It uses a neural network to distinguish between normal and whispered words, which can be a challenge as whispered speech is mainly unvoiced, that is, it doesn’t involve the vibration of the vocal cords and “tends to have less energy in lower frequency bands than ordinary speech”.

Alexa Guard is another feature that’s available in the US. Say you have left the house and you want Alexa to tell you about intruders. Glass breaking is a good indicator of intrusion and Alexa has the knowledge to pick up such sounds. You have to opt for the feature. With far-field microphones in every Echo device, Alexa is a good listener. The feature puts those mics to use by listening for the sound of glass breaking or the sound of alarms when you aren’t home.